Graduating Ph.D., Computer Science

About Me

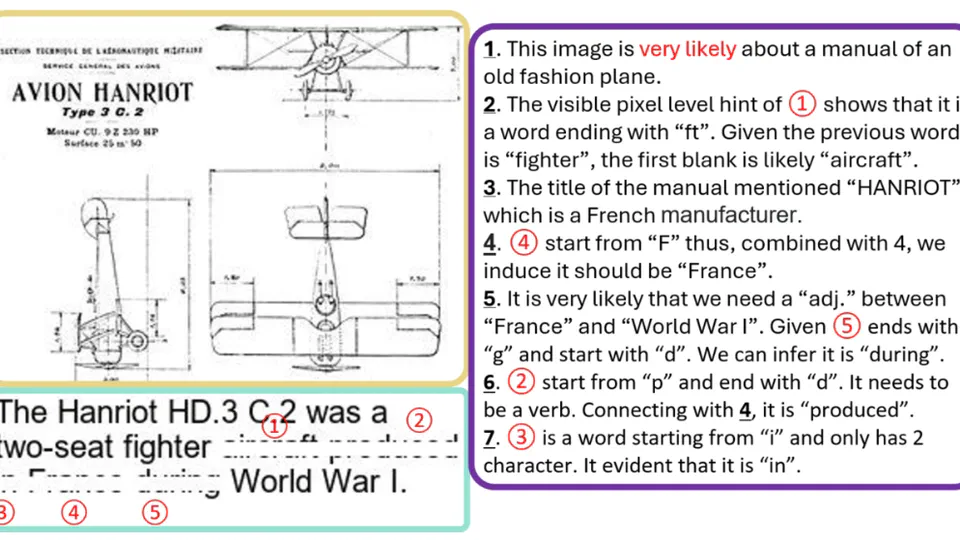

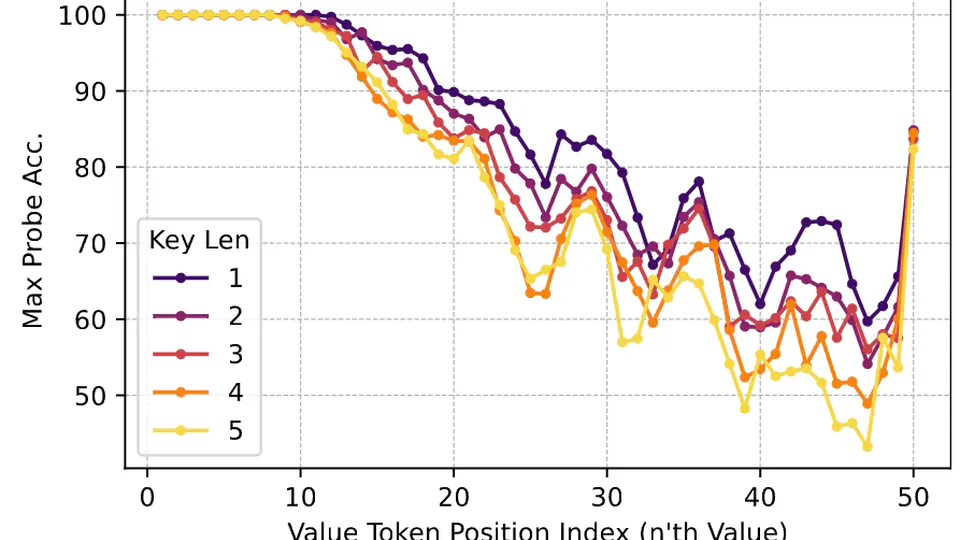

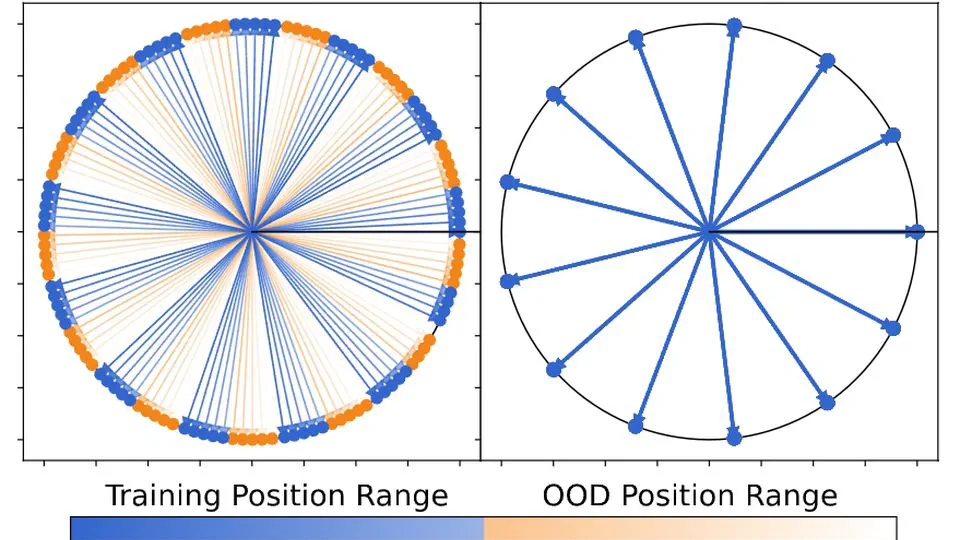

Suyuchen Wang is a graduating Ph.D. candidate at Mila - Quebec AI Institute and Université de Montréal, supervised by Bang Liu. His research spans the full stack of making language models more capable, from efficient long-context modeling (Resonance RoPE, ACL 2024) to retrieval-augmented reasoning (CARE, EMNLP 2025) and, more recently, vision-language understanding (VCR, ICLR 2025, co-first author with Yoshua Bengio).

He has published 20+ papers at venues including ICLR, EMNLP, ACL, NeurIPS, and The Web Conference. His open-source contributions include model checkpoints on Hugging Face and a Chrome extension for arXiv-to-Markdown conversion (⭐90+). He has held research positions at ServiceNow Research, Huawei Noah’s Ark Lab, and Tencent Jarvis Lab.

- Large Language Models

- Vision-Language Models

- Long-Context & Efficient Reasoning

- Retrieval-Augmented Generation

- Open-Source ML Tools

Ph.D., Computer Science

Mila - Quebec AI Institute / Université de Montréal

B.Eng. (Hons.), Computer Science

Beihang University